In this paper we introduce the Scalable AI Accelerator (SAIA), a Slurm-native platform for operating services.

SAIA can run any kind of service, i.e., an application that requires HPC resources, must be kept running continuously, and must be accessible from the internet.

However, the dominant use case for SAIA is hosting AI services such as LLMs, image generation models, or other AI-based applications.

Such services require the hosting of large AI models, which in turn require access to powerful GPU nodes that are only available in HPC environments or dedicated data centers.

The paper focuses on HPC environments that are managed by a batch scheduler such as Slurm and that may already be in use for regular HPC workloads such as training AI models.

The architecture of SAIA includes a scheduler that operates on top of Slurm to ensure that the desired state of active service instances is always maintained.

It does so by regularly checking which services are running as Slurm jobs and launching service instances as new Slurm jobs to achieve the desired state.
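
The reconciliation step described above can be sketched as a small pure function. This is a minimal illustration and not SAIA's actual implementation: the function name `plan_launches`, the service names, and the data shapes are assumptions made for this example. In a real deployment the list of running jobs would presumably come from querying Slurm, and each planned launch would be submitted as a new Slurm job.

```python
from collections import Counter


def plan_launches(desired, running_jobs):
    """Return how many new instances to submit per service.

    desired:      mapping of service name -> desired number of instances
                  (hypothetical configuration format)
    running_jobs: iterable of service names, one entry per active Slurm job
    """
    active = Counter(running_jobs)
    # Only services that are below their desired count need new jobs.
    return {
        name: want - active[name]
        for name, want in desired.items()
        if want > active[name]
    }
```

For example, with two desired `llm` instances, one desired `image` instance, and a single `llm` job currently running, the function plans one launch for each of the two services.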

As Slurm jobs are time-limited by default and are terminated automatically when they expire, the same applies to the services deployed via SAIA.

SAIA detects when a Slurm job running a service is about to expire, launches a replacement instance in time, and ensures that traffic is safely handed over from the old instance to the new one.
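
The expiry handling can be illustrated with a small decision function. This is a sketch under stated assumptions, not the logic used in SAIA itself: the function name `handover_action`, the state labels, the returned action names, and the default safety margin are all hypothetical. The idea is to launch the replacement early enough to cover its startup time, and to move traffic only once the replacement is ready.

```python
def handover_action(seconds_left, replacement_state, startup_seconds,
                    safety_margin=300):
    """Decide the next step for a service instance nearing its time limit.

    seconds_left:      remaining runtime of the current Slurm job
    replacement_state: None, "starting", or "ready" (hypothetical labels)
    startup_seconds:   estimated time a new instance needs to become ready
    safety_margin:     extra buffer in seconds (assumed default)
    """
    if replacement_state == "ready":
        # The new instance can serve requests, so traffic can move over.
        return "switch_traffic"
    if replacement_state == "starting":
        # Keep serving from the old instance until the new one is ready.
        return "keep_old"
    if seconds_left <= startup_seconds + safety_margin:
        # Not enough time left to wait any longer.
        return "launch_replacement"
    return "wait"
```

With a startup estimate of 600 seconds and the default margin, a job with one hour left yields `"wait"`, while a job with 800 seconds left and no replacement yet yields `"launch_replacement"`.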

HPC environments are often considered high-security environments, as they host sensitive research data and high-end compute resources that attackers could misuse, for example for crypto mining.

As services are expected to be accessible from the public internet, the architecture of SAIA must provide appropriate security measures to ensure the safety of the HPC environment.

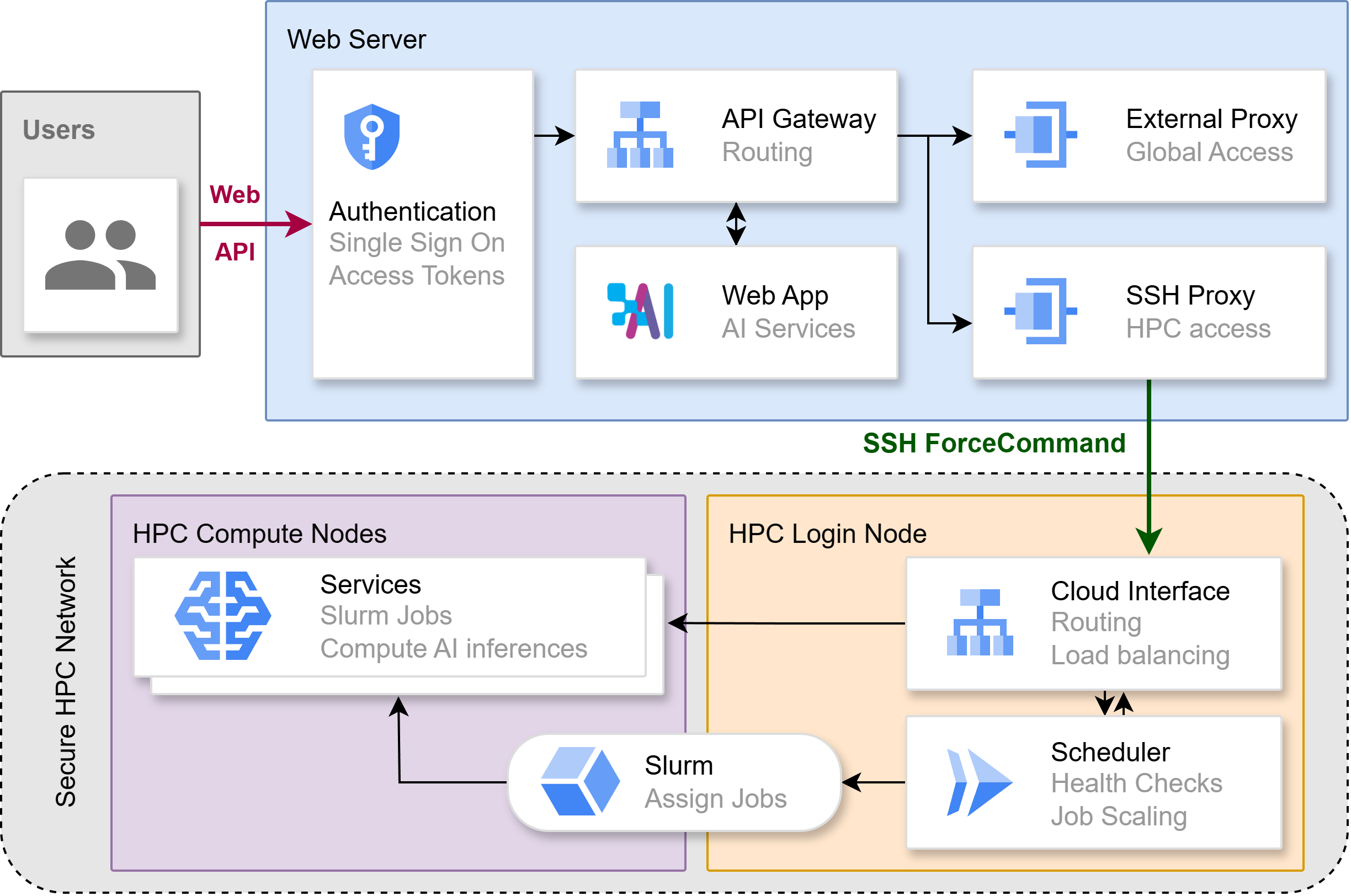

SAIA consists of two major environments that serve as a frontend and backend, respectively.

The backend operates on top of a Slurm-based HPC environment, as described above, while the frontend is installed in a private cloud.

The two environments are connected via an SSH-based tunnel that uses an SSH key whose access to the HPC environment is restricted through the SSH ForceCommand directive.

This directive enables setting a list of commands for an SSH key such that a user logged in with that key may only execute the commands on this list and no others.

This limitation is enforced by the SSH server, so an attacker who gains access to this key cannot add more commands to the list.
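
One common way to realize such a command list is a wrapper script that the SSH server runs as the forced command. With OpenSSH, the command the client originally requested is passed to the forced command in the `SSH_ORIGINAL_COMMAND` environment variable, which the wrapper can check against an allowlist. The following is a minimal sketch of that check and not SAIA's actual wrapper: the function name `is_allowed` and the allowlist contents are assumptions chosen for illustration.

```python
import shlex

# Hypothetical allowlist; the real set of permitted commands is not
# specified here.
ALLOWED_COMMANDS = {"squeue", "sbatch", "scancel"}

# Characters that could chain or redirect commands if passed to a shell.
SHELL_METACHARACTERS = set(";|&$`<>\n")


def is_allowed(original_command, allowed=ALLOWED_COMMANDS):
    """Return True if the requested command may be executed.

    original_command: the string a wrapper would read from the
                      SSH_ORIGINAL_COMMAND environment variable
    """
    if any(ch in SHELL_METACHARACTERS for ch in original_command):
        return False
    try:
        argv = shlex.split(original_command)
    except ValueError:
        # Malformed quoting is rejected rather than guessed at.
        return False
    return bool(argv) and argv[0] in allowed
```

A wrapper built on this check would read `os.environ.get("SSH_ORIGINAL_COMMAND", "")`, exit with an error if `is_allowed` returns `False`, and otherwise execute the parsed argument list without a shell. Because the check runs on the server side, a client holding the key cannot alter it.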

Even if an attacker exploits a web service on the private cloud and gains a foothold there, the attack is halted at this point, because the SSH tunnel with the restricted key is the only entrance into the HPC environment.

The paper further presents a systematic study of the security measures taken as well as benchmarks of the scheduler's performance.

We employ SAIA to host, among others, Chat AI, which has attracted a significant number of users, as shown in the paper.

As part of the paper, we provide the source code of SAIA and Chat AI.

In 2024 we released a paper about Chat AI and SAIA, which has since been superseded by our more recent paper focused on SAIA: https://doi.org/10.21203/rs.3.rs-6648693/v1